One API to access GPT-5.2, Claude, Gemini, DeepSeek, Llama, Grok & more with compare, blend, judge, and failover orchestration modes.

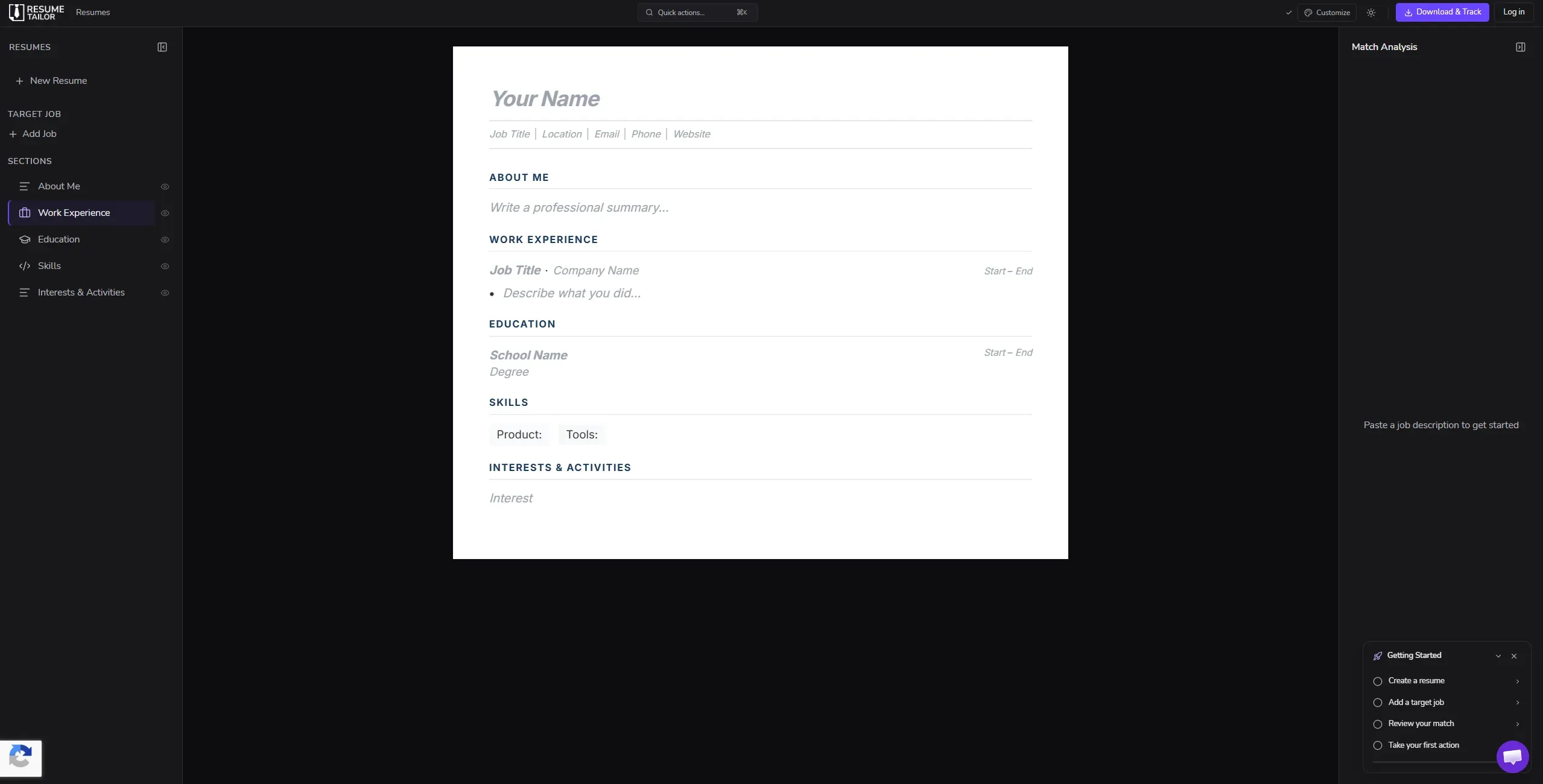

AI-powered resume tailoring tool that optimizes resumes for specific job descriptions in 30 seconds with ATS scoring.

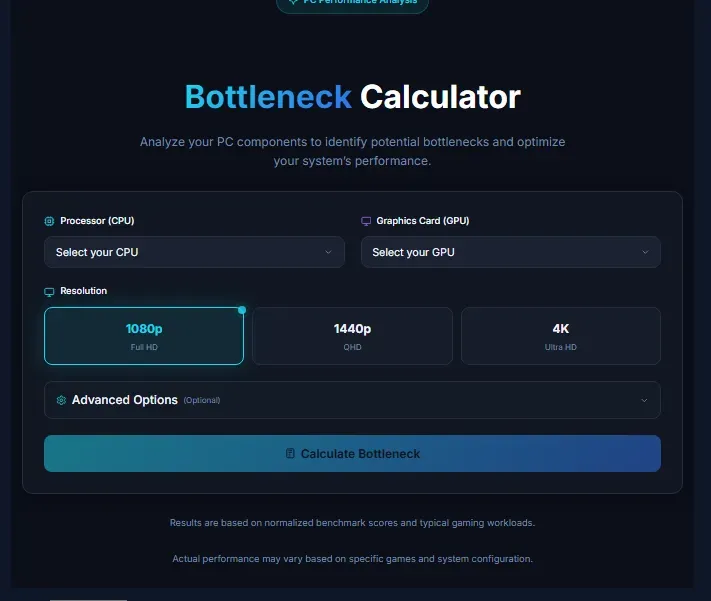

Analyze your PC components to identify potential bottlenecks and optimize your system’s performance.


LLMWise is a multi-model LLM API platform that provides unified access to over 24 AI models from 13 providers through a single API key. It lets developers compare outputs side by side, blend responses from multiple models, route requests using an AI judge, and configure automatic failover chains.
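Failover, compare, and judge are generic orchestration patterns. The sketch below illustrates them in plain Python, assuming each model is wrapped as a function that takes a prompt and returns text. The names `failover`, `compare`, `judge`, and `AllModelsFailed` are hypothetical and do not reflect LLMWise's actual API. In the judge sketch, a plain scoring function stands in for the judging model.

```python
from typing import Callable, Sequence

# Hypothetical: a "model" is any function prompt -> text.
# This is NOT the LLMWise API; real providers would be wrapped behind such functions.
ModelFn = Callable[[str], str]


class AllModelsFailed(Exception):
    """Raised when every model in the failover chain raised an error."""

    def __init__(self, errors: list[tuple[str, Exception]]):
        super().__init__(f"all {len(errors)} models failed")
        self.errors = errors


def failover(prompt: str, chain: Sequence[tuple[str, ModelFn]]) -> tuple[str, str]:
    """Try each (name, model) in order; return (name, output) from the first success."""
    errors: list[tuple[str, Exception]] = []
    for name, model in chain:
        try:
            return name, model(prompt)
        except Exception as exc:  # record the failure, then fall through to the next model
            errors.append((name, exc))
    raise AllModelsFailed(errors)


def compare(prompt: str, models: Sequence[tuple[str, ModelFn]]) -> dict[str, str]:
    """Send the same prompt to every model and collect the outputs side by side."""
    return {name: model(prompt) for name, model in models}


def judge(
    prompt: str,
    models: Sequence[tuple[str, ModelFn]],
    score: Callable[[str, str], float],
) -> tuple[str, str]:
    """Collect all outputs, then return the (name, output) the scoring function rates highest.

    In a real system `score` would typically be another LLM; here it is any function.
    """
    outputs = compare(prompt, models)
    best = max(outputs, key=lambda name: score(prompt, outputs[name]))
    return best, outputs[best]


if __name__ == "__main__":
    def flaky(prompt: str) -> str:
        raise TimeoutError("provider timed out")

    def echo(prompt: str) -> str:
        return f"echo: {prompt}"

    name, text = failover("hello", [("primary", flaky), ("backup", echo)])
    print(name, text)  # prints "backup echo: hello"
```

Ordering the chain from preferred to fallback model keeps the happy path to a single call; the errors collected in `AllModelsFailed` let a caller see why each model in the chain was skipped.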


The platform is aimed at developers, startups, and enterprises that build AI-powered applications and need reliable, cost-effective access to multiple LLMs without managing separate subscriptions.